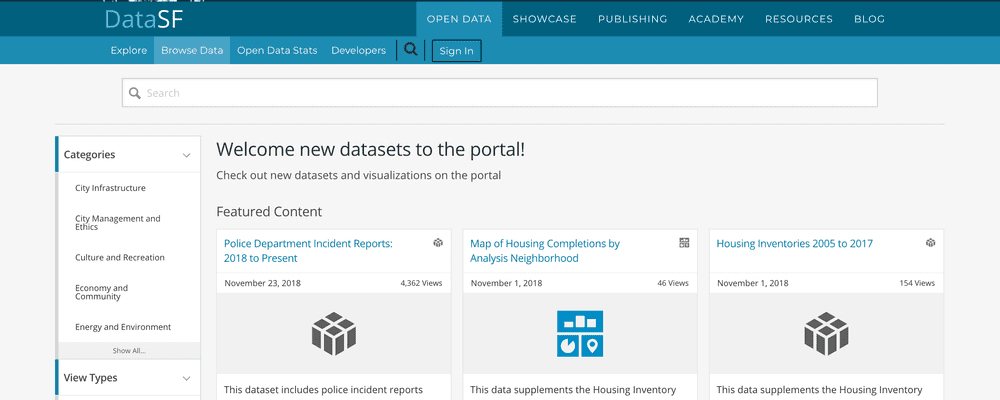

SF Open Data Program

Program goals

The Open Data Program is a collection of projects that make timely data easily available to City departments and the public. This means data is offered:

- At no cost

- With permissive licensing

- In machine-readable formats, including via Application Programming Interfaces (APIs)

In addition, the Open Data Program supports:

- Improved knowledge of data assets

- Data governance around those assets

- Increased use of data in decision-making

My role was to identify and implement continuous improvements to the program in support of DataSF’s mission to empower use of the City’s data.

Approach

Our overriding approach at DataSF is to use lean, continuous improvement cycles to plan, do, check, and act on deliverables.

The Open Data Program's projects fall into several work streams that support the goal of timely data made easily available. These are:

- publishing support and automation services,

- data coordination, governance and quality management, and

- user support and training

Below, I’ll highlight some projects I delivered to support open data.

- Develop open data publishing process. To accommodate a federated data environment, we needed a publishing process that DataSF could manage and monitor for ongoing improvement, all within a constrained budget. With the team, I developed an intake process and standardized work for developing data pipelines. We use a Trello board to manage the work, and data about the process is automatically captured and rolled up into a Power BI dashboard for planning and monitoring. We documented the “open data operating manual” in a four-part blog series.

- Inventory data citywide. State and local law requires an inventory of City systems and datasets, updated at least annually. I have managed and improved the inventory process each year since the first one in 2015. I work with 50+ data coordinators in departments to collate and update the inventory. This includes developing the templates, processes and guidance to support the updates. I developed scripts to populate templates with existing inventoried data and then to ingest the completed templates back into Airtable. I also developed scripts to sync the inventories of systems and datasets to the open data portal.
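
To make the template step of the inventory project more concrete, here is a minimal Python sketch of how existing inventory records could be split into one pre-populated CSV template per department and then read back after coordinators update them. It is an illustration under stated assumptions, not the scripts DataSF runs: the field names, the sample records, and the helper functions `group_by_department`, `render_template`, and `parse_template` are all hypothetical, and the Airtable ingest itself is left out.

```python
import csv
import io
from collections import defaultdict

# Hypothetical column names; the real inventory schema is not described here.
FIELDS = ["department", "dataset_name", "description", "status"]


def group_by_department(records):
    """Group inventory records by their department."""
    grouped = defaultdict(list)
    for record in records:
        grouped[record["department"]].append(record)
    return dict(grouped)


def render_template(rows):
    """Render one department's rows as CSV text, sorted by dataset name."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    for row in sorted(rows, key=lambda r: r["dataset_name"]):
        # Missing fields become empty cells for the coordinator to fill in.
        writer.writerow({field: row.get(field, "") for field in FIELDS})
    return buffer.getvalue()


def parse_template(text):
    """Read a completed template back into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))


# Made-up sample records standing in for last year's inventory.
existing = [
    {"department": "Library", "dataset_name": "Branch hours",
     "description": "Opening hours by branch", "status": "published"},
    {"department": "Public Works", "dataset_name": "Street tree list",
     "description": "Trees maintained by the City", "status": "published"},
    {"department": "Library", "dataset_name": "Circulation counts",
     "description": "Monthly checkouts"},  # no status recorded yet
]

# One pre-populated template per department.
templates = {
    department: render_template(rows)
    for department, rows in group_by_department(existing).items()
}

# Rows parsed from a returned template, ready for whatever loader ingests them.
library_rows = parse_template(templates["Library"])
```

Pre-populating templates this way means coordinators only correct or extend what is already known rather than re-entering it each year, and keeping the rendering and parsing functions symmetric makes the round trip easy to check.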

Outcomes

- 136% increase in available data on the portal between August 2014 and October 2019. Consistent, centralized publishing support and data pipeline development contributed to this growth.

- 96% of datasets published with APIs. Before the publishing program was established, only about 35% of open datasets had APIs; the remainder were posted as external links to files. This has improved accessibility to and reuse of data.

- 84% of datasets amenable to automation are automated. Because timely data is important, we built out an automation program to support timely publishing.

Out in the world

You and the @datasf team are making this possible - so many thanks!

— SF City Scorecards (@SFCityScorecard) December 1, 2018

Top State & Local Websites... We’re excited to be included along w/ @DataSF, @govtechnews, @Microsoft_Gov, @NASCIO, @Oracle, @PewStates, @TechSoup4Libs, @GOVERNING, and @smartcitiesdive https://t.co/p2ITggaYq5

— ELGL (@ELGL50) November 8, 2018

.@datasf is on a sharing roll (per usual). 🙌🏼 Great resources being shared on how to think about and run #open data programs for #OpenDataDay! https://t.co/5MpA6dorKY

— Lilian Coral (@lcoral) March 3, 2018

https://t.co/awJQT0EUBr has many educational resources so more ppl can learn how to use the #opendata @synchronouscity @DataSF

— Exygy (@exygy) April 28, 2017

.@SeattleCTO praises @DataSF resources. https://t.co/Rr7wmQpIWY I agree. (Ours rock too, of course https://t.co/DsbyiCFzaT ). #cfasummit

— Andrew Nicklin (@technickle) November 2, 2016